AI models are more accessible than ever. You can spin up a capable language model in minutes, hook it into your application, and ship something impressive and fast. But at some point, every team hits a ceiling. The model is good, just not your good. Responses drift off-brand. Edge cases pile up. Costs balloon as you scale. And a bigger model doesn't always fix it.

This is where model customization becomes the most valuable skill in an AI developer's toolkit.

The Customization Spectrum

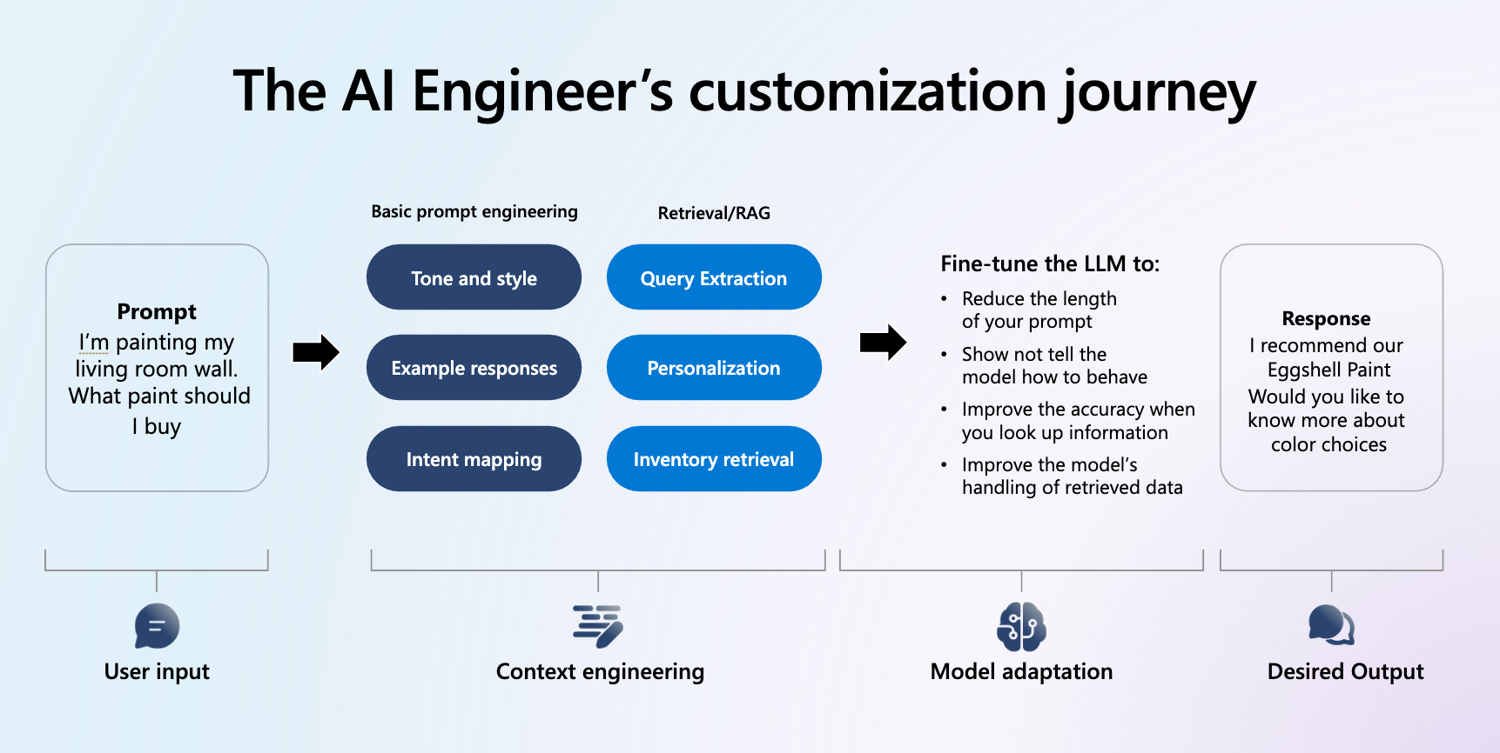

There's no single answer to "how do I make my model better." The right approach depends on your problem, your data, and your constraints. The options range from lightweight to deep:

- System prompts — Shape model behaviour with instructions at inference time.

- Few-shot examples — Show the model what good looks like inline.

- Retrieval-Augmented Generation (RAG) — Ground responses in your own data at runtime.

- Supervised Fine Tuning (SFT) — Train the model on your own examples so the behaviour is baked in, not bolted on.

- Distillation — Compress the capability of a large model into a smaller, cheaper one without significant accuracy loss.

- Reinforcement Fine Tuning (RFT) — Push reasoning models further using rewards-based training, ideal for complex multi-step tasks.

Understanding this spectrum — and knowing when to move along it — is what separates teams that prototype well from teams that ship well.
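To make the step from "bolted on" to "baked in" concrete, here is a minimal sketch of how the same handful of examples might be used in two ways: as few-shot messages sent with every request, and as chat-format JSON Lines records of the kind commonly used for supervised fine tuning. The retail wording, the example pairs, and the function names are all hypothetical, and the exact training file schema should be checked against the documentation for the platform you use.

```python
import json

# Hypothetical system prompt and examples for an illustrative retail assistant.
SYSTEM_PROMPT = "You are a concise support assistant for a retail store."

EXAMPLES = [
    ("Where is my order?",
     "Please share your order number and I will check its status."),
    ("Can I return shoes?",
     "Yes, unworn shoes can be returned within 30 days."),
]


def build_few_shot_messages(user_input):
    """Lightweight end of the spectrum: the system prompt and the
    examples travel inline with every inference call."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    for question, answer in EXAMPLES:
        messages.append({"role": "user", "content": question})
        messages.append({"role": "assistant", "content": answer})
    messages.append({"role": "user", "content": user_input})
    return messages


def build_sft_records():
    """Deeper end of the spectrum: each example becomes one training
    record, serialised as one JSON object per line (JSON Lines).
    The "messages" layout is an assumption about the training schema."""
    lines = []
    for question, answer in EXAMPLES:
        record = {
            "messages": [
                {"role": "system", "content": SYSTEM_PROMPT},
                {"role": "user", "content": question},
                {"role": "assistant", "content": answer},
            ]
        }
        lines.append(json.dumps(record))
    return "\n".join(lines)


if __name__ == "__main__":
    print(len(build_few_shot_messages("Do you ship abroad?")), "messages per call")
    print(build_sft_records())
```

The few-shot prompt grows with every example you add and is paid for on every call, whereas the training records are used once, after which a fine-tuned model could in principle be prompted with the user input alone.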

When Fine Tuning Is the Right Move

Fine tuning isn't always necessary, but when it is, it's a game-changer. It tends to be the right call when:

- You need consistent tone, format, or domain-specific behaviour that prompts alone can't reliably deliver

- You're making the same kinds of corrections repeatedly across many inference calls

- Latency and cost matter and you want to achieve the same quality with a smaller model

- You're building a multi-agent system where predictability at each step is critical

The key is recognising when you've exhausted the easier options and it's time to invest in training.

Microsoft Foundry: A Full Platform for Model Optimization

Microsoft Foundry brings the full customization stack into one place, from the playground for rapid experimentation through to production-grade fine tuning pipelines with Azure OpenAI Service. It supports Distillation, Supervised Fine Tuning, and Reinforcement Fine Tuning, giving teams the tools to go as deep as their scenario demands.

Go Deeper: Two Upcoming Sessions

If this has sparked questions, we have two hands-on sessions next week that take you from concept to execution:

A Practical Guide to Model Training in Microsoft Foundry covers the full customization landscape, using a real retail scenario to ground the theory in practice. You'll leave with a clear framework for choosing the right approach and the confidence to run your first fine tuning job.

Fine Tuning Model Customization with Microsoft Foundry goes deeper, with live demos of Distillation and Supervised Fine Tuning and a focus on building AI that is accurate, cost-effective, and production-ready.

Attend one, or attend both. Either way, you'll leave with a sharper understanding of how to get more from your models.

Come ready to build at ECS 2026 in Cologne.