That's a real story, and it's worth telling carefully, because there's a version of it that reads like vendor marketing and a version that reads like the most concrete public evidence yet that AI-assisted vulnerability discovery has crossed the line from "interesting demos" to "shipping in production."

What actually happened

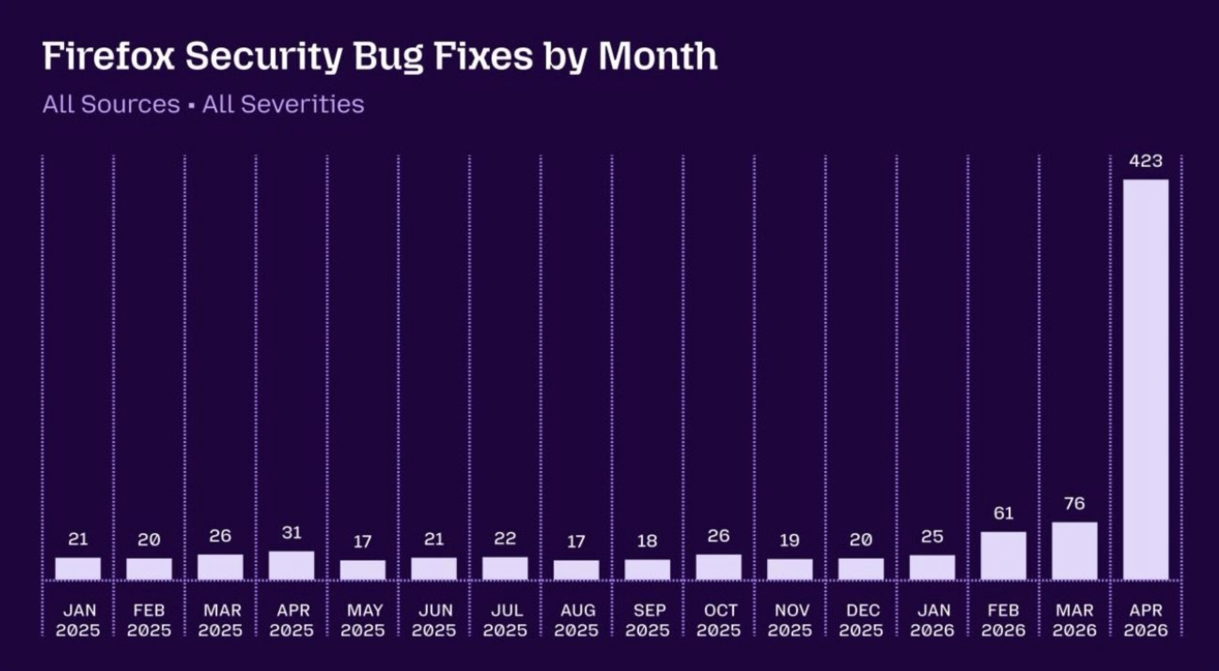

Mozilla and Anthropic ran two collaborations in sequence. The first, in February, used Claude Opus 4.6 against roughly 6,000 Firefox C++ files. It surfaced 22 security-sensitive bugs whose fixes landed in Firefox 148. For scale, that is about a fifth as many as all the high-severity Firefox CVEs from 2025, and it took two weeks of automated work. That alone would have been a notable result.

The second run, in April, used Claude Mythos Preview — a frontier model Anthropic announced on 7 April under a programme called Project Glasswing. Firefox 150 shipped on 21 April with patches for 271 vulnerabilities found by Mythos in a single evaluation pass: 180 sec-high, 80 sec-moderate, 11 sec-low, per Mozilla's own technical write-up. Combined with internal pipeline finds (also largely AI-assisted) and external reports, the April total hit 423.

Some of those bugs had been sitting in the codebase for a very long time. A flaw in a basic HTML element that had gone unnoticed for fifteen years. A memory-safety issue in an older XML processing feature that had been there for two decades. The kind of thing elite human researchers could in principle find — Mozilla's engineers are careful to say the model didn't surface anything categorically beyond human reach — but in practice hadn't.

Why this is more than a vendor announcement

The instinct to discount this as marketing is reasonable. Anthropic released Mythos Preview to a small ring of partners, generated dramatic headline numbers, and didn't make the model generally available. Bruce Schneier called the rollout "very much a PR play." Security researcher Davi Ottenheimer showed that smaller, cheaper models inside a competent harness produced overlapping findings at a fraction of the cost. Both critiques have weight.

But two things distinguish the Firefox case from the usual hype cycle.

First, Mozilla published the data. The Mozilla Hacks engineering post-mortem links to specific Bugzilla entries. Mozilla's official advisory for the release lists every fix, including a few that group hundreds of related defects into single entries. The full picture is auditable if you take the time to dig in. Mozilla has no commercial stake in selling Mythos; that makes its disclosure the most credible piece of third-party evidence in the whole story.

Second, this isn't an isolated event. Google's Big Sleep agent caught a critical flaw in SQLite last summer before attackers could use it; Google says the vulnerability was known only to threat actors at the time. OpenAI's Aardvark, now part of its Codex Security product, has been credited with helping uncover thousands of high-severity issues across open source. Google's AI-augmented fuzzing tools dug up a memory-corruption bug in OpenSSL that had been hiding in the code for twenty years. The Firefox numbers are the loudest data point in a trend that's been building for eighteen months.

The harness matters as much as the model

The cleanest takeaway from Mozilla's write-up isn't "frontier models are smart now." It's that the system — model plus agentic harness plus deduplication plus validation — is what produced the result. The harness lets the model run test cases, prune false positives, and prioritise. Without it, a raw model produces noise. With it, a mid-tier model becomes useful and a frontier model becomes startling.

This matters for anyone building or buying security tooling. The model is a component, not the product. Teams that treat "we plugged in Claude/GPT/Gemini" as the work will get mediocre results. Teams that invest in the scaffolding — the test harness, the triage logic, the human-in-the-loop review process — will see compounding returns. Mozilla wrote every patch by hand, with a second engineer reviewing. AI accelerated discovery; it didn't replace remediation, and the engineering team didn't pretend otherwise.
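
To make the "model plus harness" idea concrete, here is a minimal sketch of the post-model stages described above: deduplicate, validate by re-running a test case, then prioritise. It is an illustration under stated assumptions, not Mozilla's or Anthropic's actual pipeline. The `Finding` fields, the deduplication key, and the `reproduces` callback are all hypothetical; only the severity labels (sec-high, sec-moderate, sec-low) come from Mozilla's reported breakdown.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

# Severity labels as reported by Mozilla; the ranking order is an assumption.
SEVERITY_RANK = {"sec-high": 0, "sec-moderate": 1, "sec-low": 2}


@dataclass(frozen=True)
class Finding:
    """One candidate bug reported by the model (hypothetical schema)."""
    file: str
    function: str
    bug_class: str   # e.g. "use-after-free"
    severity: str    # one of the SEVERITY_RANK keys
    test_case: str   # input the model claims triggers the bug


def dedupe(findings: Iterable[Finding]) -> List[Finding]:
    """Keep the first finding per (file, function, bug class)."""
    seen = set()
    unique = []
    for f in findings:
        key = (f.file, f.function, f.bug_class)
        if key not in seen:
            seen.add(key)
            unique.append(f)
    return unique


def triage(findings: Iterable[Finding],
           reproduces: Callable[[Finding], bool]) -> List[Finding]:
    """Deduplicate, drop findings that fail validation, sort by severity."""
    confirmed = [f for f in dedupe(findings) if reproduces(f)]
    return sorted(confirmed, key=lambda f: SEVERITY_RANK[f.severity])


# Hypothetical raw model output: one duplicate and one false positive.
raw = [
    Finding("a.cpp", "parse", "use-after-free", "sec-low", "case-1"),
    Finding("a.cpp", "parse", "use-after-free", "sec-low", "case-1b"),
    Finding("b.cpp", "decode", "out-of-bounds", "sec-high", "case-2"),
    Finding("c.cpp", "render", "out-of-bounds", "sec-moderate", "noise"),
]

# Stand-in for actually running the test case against an instrumented build.
queue = triage(raw, reproduces=lambda f: f.test_case != "noise")
for f in queue:
    print(f.severity, f.file, f.function)
```

In a real harness the `reproduces` step would be the expensive part, since it means building and running the target. That is the stage that turns raw model output into something a human reviewer can trust enough to spend time on.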

The dual-use problem nobody has solved

Anthropic's stated reason for keeping Mythos restricted is candid: the same capabilities that help defenders patch faster help attackers exploit faster. So Glasswing went out to roughly a dozen launch partners (AWS, Apple, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, NVIDIA, Palo Alto Networks, the Linux Foundation, and Anthropic itself) plus around forty additional critical-software organisations. OpenAI took a noticeably different approach — its Trusted Access for Cyber programme scales to thousands of verified individual defenders with identity checks and approved-use scoping.

Neither approach is obviously right. Anthropic's containment is tighter but narrower; OpenAI's reach is broader but harder to police. And on the day Glasswing launched, an unauthorised group reportedly gained access to Mythos Preview by guessing the model's URL in a third-party vendor's environment. So whatever head start defenders are getting is likely to be measured in days or weeks, not months. The European Commission has publicly endorsed the staged-release philosophy; how that interacts with the EU AI Act's August 2026 obligations will be one of the more consequential regulatory questions of the year.

What it means for the rest of us

If you run security for an organisation that isn't on the partner list of a frontier lab, the practical question is what changes for you in the next twelve months. A few things look reasonably durable:

- Disclosure cadences from major open-source projects will get noisier before they get quieter. Expect more bundled advisories and more "we patched 100+ issues this release" announcements. The Firefox pattern is likely a preview, not an outlier.

- Triage capacity becomes the bottleneck. If AI can surface 271 issues at once, your patching, review, and rollout process needs to absorb that without melting down. This is now an organisational problem more than a technical one.

- Older code is no longer quietly safe. Two-decade-old vulnerabilities are being found in well-audited projects. Anything you've shipped and haven't reviewed in years is fair game.

- The defender-attacker balance is shifting, but slowly and unevenly. Better tools cut both ways, and gated-release models eventually leak. Plan for both sides of the curve.

The Firefox story is, more than anything, a useful reality check. It's not the singularity, and it's not just hype. It's a well-documented case of a class of tools graduating from research demos to production work — with all the messy operational consequences that come with that transition. The European tech community has a particular stake in getting the framing right: too much noise, and we mistake marketing for capability; too much scepticism, and we under-prepare. Somewhere between those extremes — argued out at conferences, on stage and in hallway conversations — is where most of us in the ecosystem will spend the next year figuring out what to actually do about it.