Most enterprises don't have a data shortage. They have a data findability shortage. The asset you need for that AI agent almost certainly exists somewhere in the tenant — but tracking it down, confirming it's trusted, and wiring it into a workflow has historically meant bouncing between products and copying IDs around.

Microsoft's latest move tackles exactly that seam. As of this week, the OneLake catalog is generally available natively inside Foundry, meaning AI builders can browse, evaluate, and ground agents on governed Fabric data without leaving the tool they're already in.

What's actually shipping

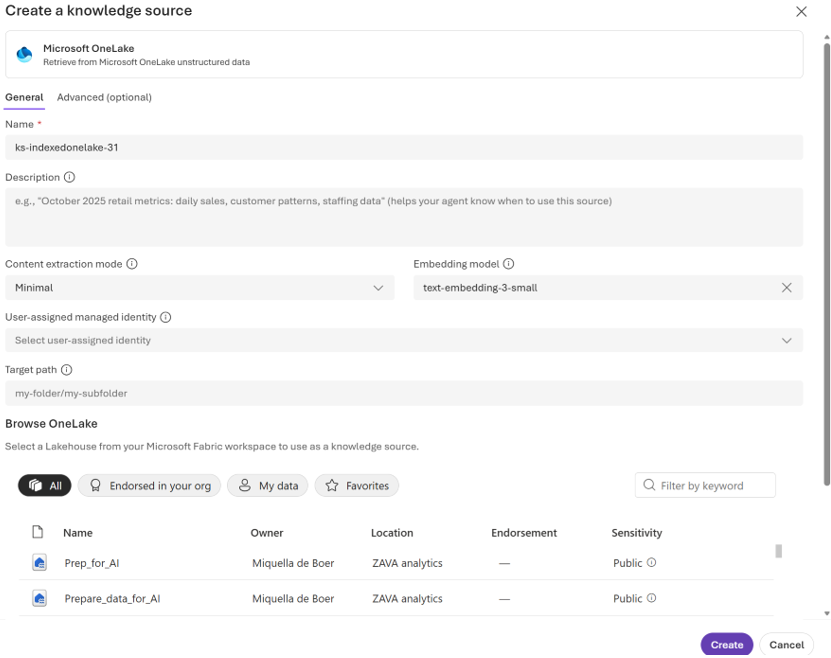

Announced by Miquella de Boer, Principal Product Manager Lead, the integration brings the OneLake catalog directly into the Foundry experience. From inside a Foundry project, you can now open the Knowledge section, pick Microsoft OneLake as a knowledge type, and browse the catalog in place. You select an asset, and it becomes a knowledge source for your agent.

The friction this removes is small but real. Previously, connecting Fabric data to a Foundry-built agent meant retrieving paths and identifiers manually and reconstructing context the platform already had. Now discovery happens first, technical setup second.
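To make the old manual step concrete, here is a minimal sketch of the kind of path assembly that used to fall to the builder. It assumes the documented OneLake ABFS addressing pattern (`abfss://<workspace>@onelake.dfs.fabric.microsoft.com/<item>.<itemtype>/<path>`). The helper name and the workspace and lakehouse names are hypothetical and do not come from the announcement.

```python
# Host used by OneLake's ADLS-compatible endpoint.
ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_abfss_path(workspace: str, item: str,
                       item_type: str = "Lakehouse",
                       relative_path: str = "") -> str:
    """Build an abfss:// URI for a Fabric item (hypothetical helper)."""
    base = f"abfss://{workspace}@{ONELAKE_HOST}/{item}.{item_type}"
    cleaned = relative_path.strip("/")
    return f"{base}/{cleaned}" if cleaned else base


# Illustrative names only; substitute your own workspace and lakehouse.
print(onelake_abfss_path("SalesWorkspace", "CustomerData",
                         relative_path="Files/contracts"))
```

Picking the asset from the catalog means the builder no longer has to look up each of these components and stitch them together by hand.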

Why the seam matters

OneLake has been positioned as Microsoft's unified data foundation across Fabric since launch. Foundry, meanwhile, is where Microsoft's AI builders increasingly live — building agents, defining knowledge bases, orchestrating tool calls. Until now, those two worlds connected, but not natively.

Bringing the catalog into Foundry is less about a feature and more about a direction of travel. Microsoft has been signalling for some time that Fabric and Foundry should feel like one continuous surface for anyone going from raw data to grounded AI. This release is a concrete step in that direction, and it's worth reading alongside the OneLake security GA that landed just before it.

Governed discovery, in context

The catalog experience inside Foundry isn't just a file picker. According to the announcement, users can evaluate signals like ownership, endorsement, sensitivity, and location before committing to a source. That's the part that matters for anyone responsible for what an agent is actually grounded on.

For architects, this is the difference between "an agent that hallucinates plausibly" and "an agent answering from data the organisation has already certified." The governance posture of OneLake — sensitivity labels, endorsements, ownership metadata — travels with the asset into the AI workflow, instead of being shed at the boundary.
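One way to think about those signals is as a vetting policy a team applies before grounding an agent. The sketch below is purely illustrative: the `CatalogAsset` shape, the field names, and the policy defaults are assumptions for the example, not the Foundry or Fabric API, and sensitivity label names vary by organisation.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class CatalogAsset:
    """Hypothetical view of the signals shown in the catalog."""
    name: str
    owner: Optional[str]
    endorsement: str   # e.g. "None", "Promoted", "Certified"
    sensitivity: str   # organisation-defined label
    location: str      # e.g. the workspace holding the item


def vetting_issues(
    asset: CatalogAsset,
    allowed_endorsements: FrozenSet[str] = frozenset({"Certified"}),
    blocked_sensitivities: FrozenSet[str] = frozenset({"Highly Confidential"}),
) -> List[str]:
    """Return the reasons an asset fails the (assumed) team policy."""
    issues = []
    if not asset.owner:
        issues.append("no owner recorded")
    if asset.endorsement not in allowed_endorsements:
        issues.append(f"endorsement is {asset.endorsement!r}")
    if asset.sensitivity in blocked_sensitivities:
        issues.append(f"sensitivity is {asset.sensitivity!r}")
    return issues


candidate = CatalogAsset("sales_gold", "data-team@contoso.example",
                         "Certified", "General", "Sales workspace")
print(vetting_issues(candidate))  # an empty list means the asset passes
```

In practice the check is a human one made in the catalog view; the point is that the inputs to it are visible at the moment of selection.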

What you need to try it

The prerequisites are modest, but worth checking before you start clicking:

- A lakehouse in Fabric. If you don't have one, Microsoft's docs walk through creating a lakehouse with OneLake.

- A Foundry project, with the New Foundry toggle switched on.

- An Azure AI Search service at Basic tier or higher, in the same tenant as your Fabric workspace, with a managed identity assigned.

Note the search service prerequisite: this isn't pure Fabric-to-Foundry plumbing. Azure AI Search does the indexing underneath, which means there's a third resource (and its associated cost and role assignments) to plan for. Setting up the managed identity requires Owner, User Access Administrator, Role Based Access Control Administrator, or a custom role with the right write permissions, so factor that into your provisioning request.
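The checklist above can be turned into a quick pre-flight check before anyone files a provisioning request. This is a rough sketch under stated assumptions: the `SearchServicePlan` type and its fields are hypothetical, the inputs are filled in by hand rather than read from Azure, and treating every tier other than Free as "Basic or higher" is a simplification.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Built-in Azure roles named in the prerequisites. A suitable custom role
# would also work, but this sketch does not model custom roles.
ROLES_THAT_CAN_ASSIGN = {
    "Owner",
    "User Access Administrator",
    "Role Based Access Control Administrator",
}


@dataclass
class SearchServicePlan:
    """Hypothetical, hand-filled description of the AI Search service."""
    tier: str
    tenant_id: str
    has_managed_identity: bool


def prerequisite_gaps(search: SearchServicePlan,
                      fabric_tenant_id: str,
                      requester_roles: Iterable[str]) -> List[str]:
    """List which stated prerequisites are not yet met."""
    gaps = []
    if search.tier.lower() == "free":  # assumption: any paid tier qualifies
        gaps.append("AI Search tier must be Basic or higher")
    if search.tenant_id != fabric_tenant_id:
        gaps.append("AI Search must be in the same tenant as the Fabric workspace")
    if not search.has_managed_identity:
        gaps.append("AI Search needs a managed identity")
    if ROLES_THAT_CAN_ASSIGN.isdisjoint(requester_roles):
        gaps.append("requester lacks a role that can set up the identity")
    return gaps


plan = SearchServicePlan(tier="basic", tenant_id="tenant-a",
                         has_managed_identity=False)
for gap in prerequisite_gaps(plan, "tenant-a", {"Contributor"}):
    print("-", gap)
```

Running it against a planned setup gives a short list of what still has to be requested.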

The flow itself

Once the prerequisites are in place, the path is short. From a Foundry project, head to Build, then Knowledge. Pick your AI Search resource, choose Create a knowledge base, select Microsoft OneLake as the knowledge type, and connect. Browse the catalog, pick the item, create the knowledge base, and save.

What's notable isn't the click count — it's that you never leave Foundry to find or vet the data. The catalog renders in place, with the metadata you need to make a sensible choice.

The bigger pattern

If you've been watching the Fabric and Foundry roadmaps, this release fits a pattern: each iteration smooths out a previously manual handover between data and AI. OneLake security GA tightened the access model. Native catalog access takes another rough edge off. Expect more along this seam — knowledge sources, governance signals, and agent grounding are converging into something that should, eventually, feel like one product rather than two integrated ones.

For teams already invested in Fabric, this noticeably lowers the cost of building Foundry agents on enterprise data. For teams weighing Microsoft against alternative AI platforms, it sharpens the argument that the governance work you've already done in Fabric earns compounding returns in the AI layer.

The Fabric-and-Foundry convergence is one of the storylines we'll be tracking closely with the European data and AI community over the coming year — these are exactly the integrations that show up first in MVP demos and architect war stories before they hit anyone's slide deck.