Enterprise AI is sprawling faster than most security teams can map it. Agents spin up inside Copilot Studio, employees connect third-party models, MCP servers proliferate, and somewhere in the middle a CISO is being asked by the board what the actual exposure looks like. The honest answer, until recently, has usually been: "We're working on it."

Microsoft's Security Dashboard for AI, announced at Ignite 2025 in November and released to public preview in February, is now generally available, and it is aimed squarely at that gap. It pulls posture and real-time risk signals from Microsoft Defender, Entra, and Purview into a single view of AI agents, apps, and platforms, designed for executives and practitioners alike. If your organisation already runs those products, no additional licence is required: the dashboard is available at ai.security.microsoft.com and inside the Defender, Entra, and Purview portals.

What the dashboard actually consolidates

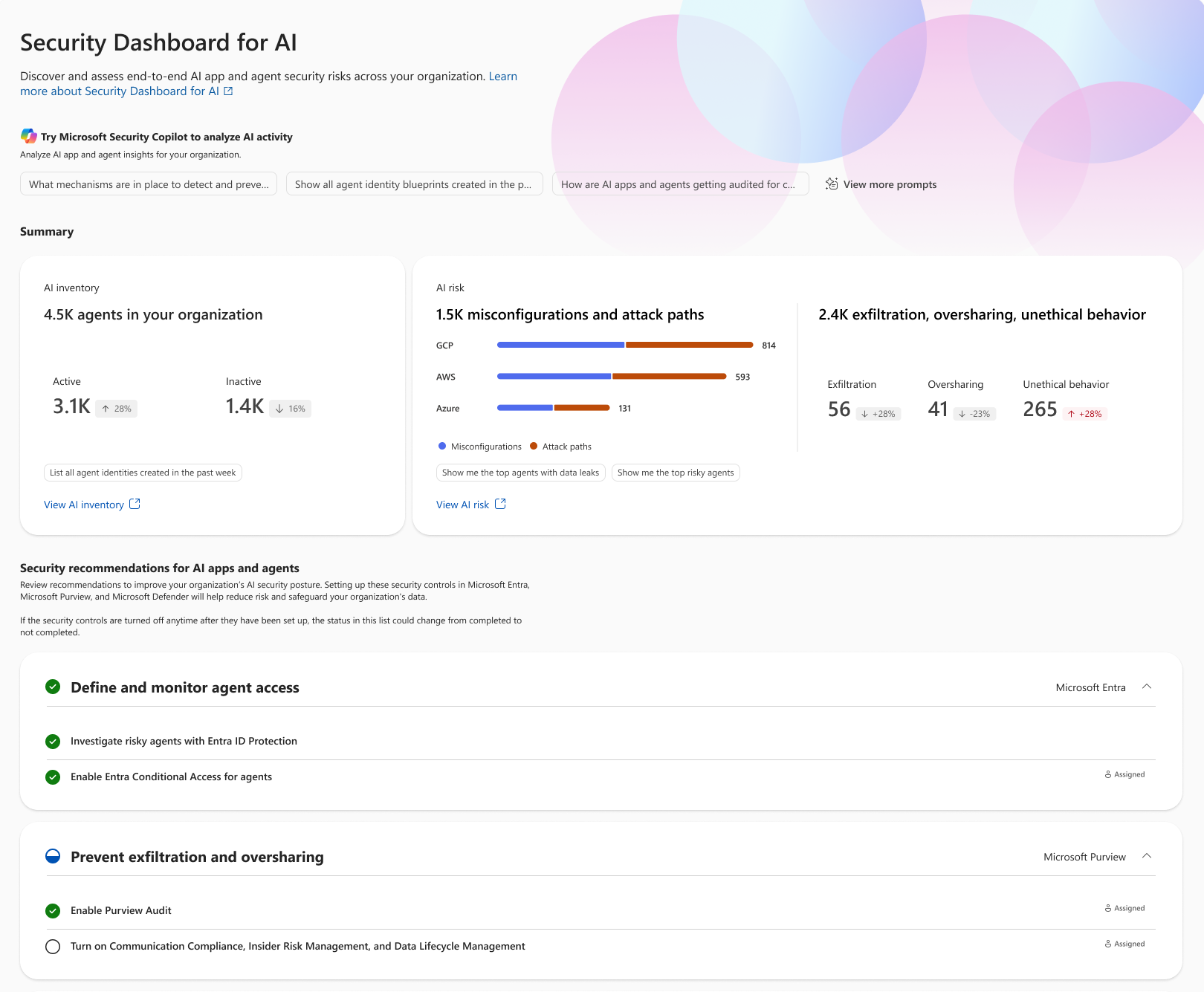

Three things, broadly. First, an AI inventory: agents, models, MCP servers, and applications, covering Microsoft's own AI services (Microsoft 365 Copilot, Copilot Studio agents, Foundry apps and agents) alongside third-party services like Google Gemini and OpenAI ChatGPT. Second, a correlated risk view across identity, data, and threat protection, so that a misconfigured agent no longer shows up as a Defender alert, an Entra anomaly, and a Purview policy hit in three different tabs. Third, a recommendation and delegation layer that surfaces remediation actions and lets administrators hand them off through Teams and Outlook.

Security Copilot is wired in for natural-language investigation. You can ask it to explore agent activity, surface unmanaged AI apps, or generate remediation steps tied to specific owners. None of this is magic: it's mostly existing telemetry, surfaced in a context that finally makes sense for AI estates.

Why CISOs were asking for this

The statistics Microsoft cites paint a clear picture. According to a May 2025 PwC survey, more than 75% of enterprises are already adopting AI agents. At the same time, Nokod research finds that over 80% of security teams report visibility gaps into the applications and AI agents being built within their own organisations. IDC's 2026 predictions go further: by 2027, four out of five organisations will face phishing attacks powered by AI-generated synthetic identities. And the tooling sprawl that's supposed to address all this isn't helping: Gartner's 2024 survey of 162 enterprises put the average organisation at 45 cybersecurity tools.

That's not surprising to anyone who's tried to get a coherent picture of agentic activity in a real tenant. The current model was never going to scale to autonomous agents that modify configurations and execute actions at machine speed: one console for identity, another for data loss prevention, another for endpoint and cloud workload protection, and a spreadsheet somewhere tracking which business unit deployed which Copilot Studio agent.

Three ways to actually use it

Microsoft frames the dashboard around three patterns drawn from early-adopter CISOs. They're worth taking seriously, because the tool is only as useful as the operating rhythm you build around it.

The first is treating the dashboard as a daily AI risk radar. Open it each morning, scan the prioritised exposures (ranked by the severity the underlying tools report), and triage the most critical ones. Unmanaged assets, emerging risks, and critical alerts are surfaced in one place rather than scattered across portals.

The second is using it as a conversation driver with the security team. Because everyone is looking at the same Defender, Entra, and Purview signals, status meetings can shift from reconciling whose data is right to deciding what to do about it. Before a meeting, run prompts in Security Copilot to pull agent activity and posture recommendations; walk in with questions, not just dashboards.

The third is anchoring board-level discussions. AI risk is now firmly a board topic, and "we have visibility" is a much better story than "we're building visibility." The risk scorecard on the Overview tab gives leaders something concrete to point at, and the inventory answers the increasingly common question: what AI is actually running in our environment?

The honest caveats

Visibility is not control. A few independent analyses have made this point sharply, and it's worth absorbing. The dashboard leans on existing Microsoft telemetry, which is strong in cloud and hybrid Microsoft-heavy environments and thinner where agents run outside that perimeter. Behavioural baselines for autonomous agents also degrade faster than those for traditional workloads, and prompt chaining or subtle data exfiltration can look a lot like normal activity.

In other words: this is the operational scaffolding, not the finished building. Organisations still need governance processes, identity hardening for agents, DLP enforcement at model boundaries, and the cultural work that no dashboard solves. One independent analysis frames the broader journey in phases, moving from discovery and visibility through to automation, prevention, and continuous governance; the dashboard supports the early phases well and informs the rest.

Where to start

If you're already licensed for Defender, Entra, or Purview, there's no procurement conversation. The realistic first step is small: log in, look at what it has discovered, and see how that compares to what your teams think is running. Most organisations find a few surprises in the inventory — shadow agents, unmanaged apps, models someone connected to a tenant via a forgotten pilot. That gap, between assumed and actual AI footprint, is usually where the first week of value sits.
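That comparison is, at heart, a set difference. The sketch below is a minimal illustration, assuming you already have two plain lists of asset names: one taken from the dashboard's inventory (however you choose to extract it; no export format or API is assumed here) and one compiled from what your teams believe is running. The function name `compare_inventories` and all asset names are hypothetical.

```python
# Minimal sketch: reconcile the discovered AI inventory with the assumed one.
# Both input sets are hypothetical; no real dashboard export format is assumed.

def compare_inventories(discovered: set[str], assumed: set[str]) -> dict[str, set[str]]:
    """Split AI assets into unexpected, missing, and confirmed buckets."""
    return {
        # Discovered in the tenant but absent from the team's list (shadow AI).
        "unexpected": discovered - assumed,
        # On the team's list but not discovered (retired, renamed, or outside telemetry).
        "missing": assumed - discovered,
        # Present in both views.
        "confirmed": discovered & assumed,
    }


# Hypothetical example data for illustration only.
discovered = {
    "HR onboarding agent",
    "Sales Copilot Studio agent",
    "ChatGPT (unmanaged)",
    "pilot-foundry-app",
}
assumed = {
    "HR onboarding agent",
    "Sales Copilot Studio agent",
    "Legal research agent",
}

result = compare_inventories(discovered, assumed)
for bucket, names in result.items():
    print(f"{bucket}: {sorted(names)}")
```

In practice, display names rarely match exactly between sources, so you would likely normalise them or compare on a stable identifier instead. The "missing" bucket is worth a look too, since an asset the dashboard cannot see may be running outside the Microsoft telemetry perimeter.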

European organisations have a particular reason to get this right early. Between the EU AI Act's phased obligations, evolving data protection guidance, and national regulators' appetite for documented AI governance, "we couldn't see it" is becoming a harder defence each quarter. Tools like this one will be a recurring topic in our community at ECS, where the practical questions tend to spark the most useful conversations: how do you actually operate this across a federated estate, and what does good governance look like in practice?